About Me

Basics:

I am an assistant professor of Computer Science at Cornell University. I specialize in computer graphics, computer vision, human-computer interaction (HCI), as well as a random sampling of other topics.

My Research Group Page

Research Video Channel

My CV

I tend to update my research group page more frequently than this one, but you can find my CV and other more random or personal stuff here.

The Before Times:

I completed my PhD at MIT in 2016, where I was advised by Fredo Durand, then worked as a postdoctoral researcher at Stanford University with Maneesh Agrawala until 2019, followed by a 1-year postdoc at Cornell Tech in NYC. I am now an assistant professor of computer science at Cornell University in Ithaca, NY.

Office hours are currently by appointment. Including "Office Hours" in the subject line of your email might help me see it.

Interested in joining the group?

More info can be found on my Joining The Group page.

Update: Fall 2023 (SIGGRAPH, ICCV, CHI, UIST, etc…)

I clearly don’t update this website as much as I should. I have added new papers to the Publications tab. I will try to launch a group website as soon as I can to better feature the students who are working on these projects. Stay tuned.

Update SIGGRAPH 2021: A Mathematical Foundation for Foundation Paper Pieceable Quilts

Our work on the geometry of paper piecing quilt designs, with first author Mackenzie Leake, was published at SIGGRAPH 2021!

Update CVPR 2020 Oral: Visual Chirality Nominated for Best Paper at CVPR 2020

Our work on Visual Chirality, with Zhiqiu Lin, Jin Sun, and Noah Snavely, is an oral at CVPR, and one of the nominees for best paper! Check out the webpage and video to learn more about the work.

Update ECCV 2020 Oral: Crowdsampling the Plenoptic Function

Our work on crowdsampling the plenoptic function was selected for an ECCV oral! Learn from Internet photos to create interactive space-time views of popular attractions! With self-supervision!

Work with Zhengqi Li, Wenqi Xian, and Noah Snavely. Check out the webpage and video to learn more.

Select Research Highlight Videos

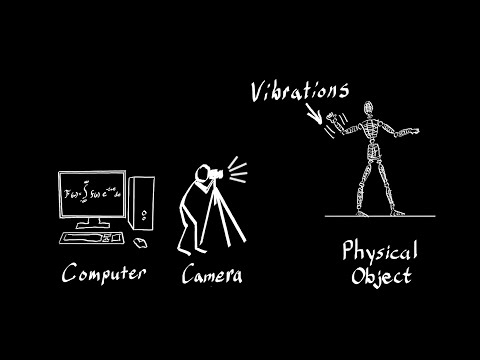

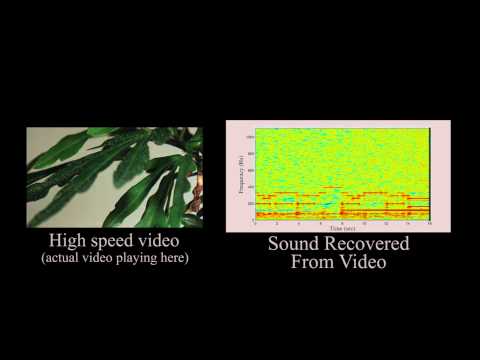

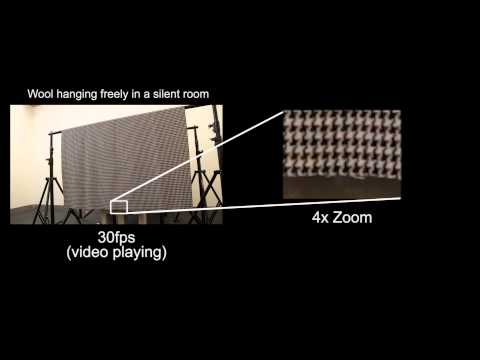

I work on a range of topics in graphics, vision, and HCI, with most of my research focusing on how to apply work in these fields to new problems and application spaces. Below you will find a sampling of videos that describe different projects I've worked on. For a longer list of my publications, see my publications page or CV. My TED 2015 talk is also a good introduction to my work on visual vibration analysis.

Click on the thumbnails below to see videos summarizing different research projects. More can be found on my publications page.

Speaking at TED 2015

Updates

Winter 2019:

- Check out the Wired article discussing some of our work on computational video editing.

- I will be serving on the papers committee for SIGGRAPH Asia 2019.

Summer 2018:

- Check out our online demo of results from our SIGGRAPH 2018 paper on Visual Rhythm and Beat.

- Code for the project can be found on my GitHub page.

Visual Rhythm & Beat Results

[Project Page]

Abstract:

We present a visual analogue for musical rhythm derived from an analysis of motion in video, and show that alignment of visual rhythm with its musical counterpart results in the appearance of dance. Central to our work is the concept of visual beats — patterns of motion that can be shifted in time to control visual rhythm. By warping visual beats into alignment with musical beats, we can create or manipulate the appearance of dance in video. Using this approach we demonstrate a variety of retargeting applications that control musical synchronization of audio and video: we can change what song performers are dancing to, warp irregular motion into alignment with music so that it appears to be dancing, or search collections of video for moments of accidentally dance-like motion that can be used to synthesize musical performances.

Visual Rhythm And Beat (Overview Video)

A video giving an overview of the project and what we can do.

Spot The Dancing Robot

Remix of Spot The Dancing Robot from Boston Dynamics.

Psy's Best Friend

This example was a lot of fun to create. I found this YouTube channel for Dancing Nathan, a dog that does this strange flailing trick on a chair, which kind of looks like off-beat dancing.

Kitty Shake Shake It

Cats dancing. Enough said.

If you're happy and you know it

The owners of the coffee shop I frequent have a toddler who is often watching this show when I go to get my coffee. They also like rap music, so I made this.

Barney Don't Need No Education

Barney and Friends retimed to “Another Brick in the Wall” by Pink Floyd.